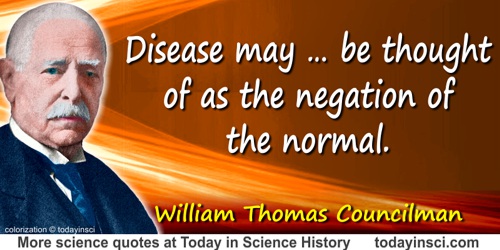

William Thomas Councilman

(1 Jan 1854 - 26 May 1933)

American pathologist who did research on diphtheria, cerebrospinal meningitis, nephritis, and smallpox. He was the principal founder of the American Association of Pathologists and Bacteriologists.

Science Quotes by William Thomas Councilman (11 quotes)

[For the] increase of knowledge and … the useful application of the knowledge gained, … there never is a sudden beginning; even the cloud change which portends the thunderstorm begins slowly.

— William Thomas Councilman

From address, 'A Medical Retrospect'. Published in Yale Medical Journal (Oct 1910), 17, No. 2, 59.

Disease may … be thought of as the negation of the normal.

— William Thomas Councilman

In Disease and its Causes (1913), 10.

Disease may be defined as “A change produced in living things in consequence of which they are no longer in harmony with their environment.”

— William Thomas Councilman

In Disease and its Causes (1913), 9.

Had there not been in zoology men who devoted themselves to such seemingly unimportant studies as the differentiation of the species of mosquitoes, we should not have been able to place on a firm foundation the aetiology of malaria and yellow fever.

— William Thomas Councilman

From address, 'A Medical Retrospect'. Published in Yale Medical Journal (Oct 1910), 17, No. 2, 65.

How much is our knowledge of bacteria due to the discovery of the aniline dyes on the one hand and the discovery by Weigert that bacteria had a selective affinity for certain of these?

— William Thomas Councilman

From address, 'A Medical Retrospect'. Published in Yale Medical Journal (Oct 1910), 17, No. 2, 61.

In the past we see that periods of great intellectual activity have followed certain events which have acted by freeing the mind from dogma, extending the domain in which knowledge can be sought, and stimulating the imagination. … [For example,] the development of the cell theory and the theory of evolution.

— William Thomas Councilman

From address, 'A Medical Retrospect'. Published in Yale Medical Journal (Oct 1910), 17, No. 2, 59.

It is an important thing that people be happy in their work, and if work does not bring happiness there is something wrong.

— William Thomas Councilman

Quoted as the notations of an unnamed student in the auditorium when Councilman made impromptu biographical remarks at his last lecture as a teacher of undergraduates in medicine (19 Dec 1921). As quoted in obituary, 'William Thomas Councilman', by Harvey Cushing, Science (30 Jun 1933), 77, No. 2009, 613-618. Reprinted in National Academy Biographical Memoirs, Vol. 18, 159-160. The transcribed lecture was published privately in a 23-page booklet, A Lecture Delivered to the Second-Year Class of the Harvard Medical School at the Conclusion of the Course in Pathology, Dec. 19, 1921.

The earliest of my childhood recollections is being taken by my grandfather when he set out in the first warm days of early spring with a grubbing hoe (we called it a mattock) on his shoulder to seek the plants, the barks and roots from which the spring medicine for the household was prepared. If I could but remember all that went into that mysterious decoction and the exact method of preparation, and with judicious advertisement put the product upon the market, I would shortly be possessed of wealth which might be made to serve the useful purpose of increasing the salaries of all pathologists. … But, alas! I remember only that the basic ingredients were dogwood bark and sassafras root, and to these were added q.s. bloodroot, poke and yellow dock. That the medicine benefited my grandfather I have every reason to believe, for he was a hale, strong old man, firm in body and mind until the infection came against which even spring medicine was of no avail. That the medicine did me good I well know, for I can see before me even now the green on the south hillside of the old pasture, the sunlight in the strip of wood where the dogwood grew, the bright blossoms and the delicate pale green of the leaf of the sanguinaria, and the even lighter green of the tender buds of the sassafras in the hedgerow, and it is good to have such pictures deeply engraved in the memory.

— William Thomas Councilman

From address, 'A Medical Retrospect'. Published in Yale Medical Journal (Oct 1910), 17, No. 2, 57. [Note: q.s. is an abbreviation for quantum sufficit, meaning “as much as is sufficient,” when used as a quantity specification in medicine and pharmacology. -Webmaster]

There is no force inherent in living matter, no vital force independent of and differing from the cosmic forces; the energy which living matter gives off is counterbalanced by the energy which it receives.

— William Thomas Councilman

In Disease and its Causes (1913), 10-11.

Traditions may be very important, but they can be extremely hampering as well, and whether or not tradition is of really much value I have never been certain. Of course when they are very fine, they do good, but it is very difficult of course ever to repeat the conditions under which good traditions are formed, so they may be and are often injurious and I think the greatest progress is made outside of traditions.

— William Thomas Councilman

Quoted as the notations of an unnamed student in the auditorium when Councilman made impromptu biographical remarks at his last lecture as a teacher of undergraduates in medicine (19 Dec 1921). As quoted in obituary, 'William Thomas Councilman', by Harvey Cushing, Science (30 Jun 1933), 77, No. 2009, 613-618. Reprinted in National Academy Biographical Memoirs, Vol. 18, 159-160. The transcribed lecture was published privately in a 23-page booklet, A Lecture Delivered to the Second-Year Class of the Harvard Medical School at the Conclusion of the Course in Pathology, Dec. 19, 1921.

With advancing years new impressions do not enter so rapidly, nor are they so hospitably received… There is a gradual diminution of the opportunities for age to acquire fresh knowledge. A tree grows old not by loss of the vitality of the cambium, but by the gradual increase of the wood, the non-vital tissue, which so easily falls a prey to decay.

— William Thomas Councilman

From address, 'A Medical Retrospect'. Published in Yale Medical Journal (Oct 1910), 17, No. 2, 59. The context is that he is reflecting on how in later years of life, a person tends to give priority to long-learned experience, rather than give attention to new points of view.

See also:

- 1 Jan - short biography, births, deaths and events on date of Councilman's birth.

In science it often happens that scientists say, 'You know that's a really good argument; my position is mistaken,' and then they would actually change their minds and you never hear that old view from them again. They really do it. It doesn't happen as often as it should, because scientists are human and change is sometimes painful. But it happens every day. I cannot recall the last time something like that happened in politics or religion.

(1987) --
