Instrument Quotes (161 quotes)

[Engineers are] the direct and necessary instrument of coalition by which alone the new social order can commence.

[Microscopic] evidence cannot be presented ad populum. What is seen with the microscope depends not only upon the instrument and the rock-section, but also upon the brain behind the eye of the observer. Each of us looks at a section with the accumulated experience of his past study. Hence the veteran cannot make the novice see with his eyes; so that what carries conviction to the one may make no appeal to the other. This fact does not always seem to be sufficiently recognized by geologists at large.

'The Anniversary Address of the President', Quarterly Journal of the Geological Society of London, 1885, 41, 59.

[Modern science] passed through a long period of uncertainty and inconclusive experiment, but as the instrumental aids to research improved, and the results of observation accumulated, phantoms of the imagination were exorcised, idols of the cave were shattered, trustworthy materials were obtained for logical treatment, and hypotheses by long and careful trial were converted into theories.

In The Present Relations of Science and Religion (1913, 2004), 3

[The infinitely small] neither have nor can have theory; it is a dangerous instrument in the hands of beginners [ ... ] anticipating, for my part, the judgement of posterity, I would dare predict that this method will be accused one day, and rightly, of having retarded the progress of the mathematical sciences.

Annales des Mathematiques Pures et Appliquées (1814-5), 5, 148.

Les mathématiques ont un triple but. Elles doivent fournir un instrument pour l'étude de la nature. Mais ce n'est pas tout: elles ont un but philosophique et, j'ose le dire, un but esthétique.

Mathematics has a threefold purpose. It must provide an instrument for the study of nature. But this is not all: it has a philosophical purpose, and, I daresay, an aesthetic purpose.

La valeur de la science. In Anton Bovier, Statistical Mechanics of Disordered Systems (2006), 161.

Question: State what are the conditions favourable for the formation of dew. Describe an instrument for determining the dew point, and the method of using it.

Answer: This is easily proved from question 1. A body of gas as it ascends expands, cools, and deposits moisture; so if you walk up a hill the body of gas inside you expands, gives its heat to you, and deposits its moisture in the form of dew or common sweat. Hence these are the favourable conditions; and moreover it explains why you get warm by ascending a hill, in opposition to the well-known law of the Conservation of Energy.

Genuine student answer* to an Acoustics, Light and Heat paper (1880), Science and Art Department, South Kensington, London, collected by Prof. Oliver Lodge. Quoted in Henry B. Wheatley, Literary Blunders (1893), 179, Question 12. (*From a collection in which Answers are not given verbatim et literatim, and some instances may combine several students' blunders.)

Theories thus become instruments, not answers to enigmas, in which we can rest. We don’t lie back upon them, we move forward, and, on occasion, make nature over again by their aid.

Pragmatism, a New Name for Some Old Ways of Thinking: Popular Lectures on Philosophy (1907), 53.

1. Universal CHEMISTRY is the Art of resolving mixt, compound, or aggregate Bodies into their Principles; and of composing such Bodies from those Principles. 2. It has for its Subject all the mix’d, compound, and aggregate Bodies that are resolvable and combinable; and Resolution and Combination, or Destruction and Generation, for its Object. 3. Its Means in general, are either remote or immediate; that is, either Instruments or the Operations themselves. 4. Its End is either philosophical and theoretical; or medicinal, mechanical, œconomical, and practical. 5. Its efficient Cause is the Chemist.

In Philosophical Principles of Universal Chemistry: Or, The Foundation of a Scientifical Manner of Inquiring Into and Preparing the Natural and Artificial Bodies for the Uses of Life: Both in the Smaller Way of Experiment, and the Larger Way of Business (1730), 1. Footnote to (1.): “The justness of this Definition will appear from the scope and tenour of the Work; though it is rather adapted to the perfect, than the present imperfect state of Chemistry….” Footnote to (4): “Hence universal Chemistry is commodiously resolved into several Parts or Branches, under which it must be distinctly treated to give a just notion of its due extent and usefulness. For tho’ in common acceptation of the word, Chemistry is supposed to relate chiefly to the Art of Medicine, as it supplies that Art with Remedies, this in reality is but a very small part of its use, compared with the rest; numerous other Arts, Trades, and mechanical Employments, Merchandize itself, and all natural Philosophy, being as much, and some of them more, concern’d therewith….”

A bird is an instrument working according to mathematical law, which instrument it is within the capacity of man to reproduce with all its movements, but not with a corresponding degree of strength, though it is deficient only in the power of maintaining equilibrium. We may therefore say that such an instrument constructed by man is lacking in nothing except the life of the bird, and this life must needs be supplied from that of man.

'Of the Bird's Movement' from Codice Atlantico 161 r.a., in Leonardo da Vinci's Notebooks, trans. E. MacCurdy (1906), Vol. 1, 153.

A man cannot be professor of zoölogy on one day and of chemistry on the next, and do good work in both. As in a concert all are musicians,—one plays one instrument, and one another, but none all in perfection.

Lecture at a teaching laboratory on Penikese Island, Buzzard's Bay. Quoted from the lecture notes by David Starr Jordan, Science Sketches (1911), 146.

A student who wishes now-a-days to study geometry by dividing it sharply from analysis, without taking account of the progress which the latter has made and is making, that student no matter how great his genius, will never be a whole geometer. He will not possess those powerful instruments of research which modern analysis puts into the hands of modern geometry. He will remain ignorant of many geometrical results which are to be found, perhaps implicitly, in the writings of the analyst. And not only will he be unable to use them in his own researches, but he will probably toil to discover them himself, and, as happens very often, he will publish them as new, when really he has only rediscovered them.

From 'On Some Recent Tendencies in Geometrical Investigations', Rivista di Matematica (1891), 43. In Bulletin American Mathematical Society (1904), 443.

About ten months ago [1609] a report reached my ears that a certain Fleming [Hans Lippershey] had constructed a spyglass, by means of which visible objects, though very distant from the eye of the observer, were distinctly seen as if nearby... Of this truly remarkable effect several experiences were related, to which some persons gave credence while others denied them. A few days later the report was confirmed to me in a letter from a noble Frenchman at Paris, Jacques Badovere, which caused me to apply myself wholeheartedly to enquire into the means by which I might arrive at the invention of a similar instrument. This I did shortly afterwards, my basis being the theory of refraction. First I prepared a tube of lead, at the ends of which I fitted two glass lenses, both plane on one side while on the other side one was spherically convex and the other concave.

The Starry Messenger (1610), trans. Stillman Drake, Discoveries and Opinions of Galileo (1957), 28-9.

All the properties that we designate as activity of the soul, are only the functions of the cerebral substance, and to express ourselves in a coarser way, thought is just about to the brain what bile is to the liver and urine to the kidney. It is absurd to admit an independent soul who uses the cerebellum as an instrument with which he would work as he pleases.

As quoted in William Vogt, La Vie d'un Homme, Carl Vogt (1896), 48. Translated by Webmaster, from the original French, “Toutes les propriétés que nous désignons sous le nom d’activité de l’âme, ne sont que les fonctions de la substance cérébrale, et pour nous exprimer d’une façon plus grossière, la pensée est à peu près au cerveau ce que la bile est au foie et l’urine au rein. Il est absurde d’admettre une âme indépendante qui se serve du cervelet comme d’un instrument avec lequel elle travaillerait comme il lui plait.”

Always preoccupied with his profound researches, the great Newton showed in the ordinary affairs of life an absence of mind which has become proverbial. It is related that one day, wishing to find the number of seconds necessary for the boiling of an egg, he perceived, after waiting a minute, that he held the egg in his hand, and had placed his seconds watch (an instrument of great value on account of its mathematical precision) to boil!

This absence of mind reminds one of the mathematician Ampere, who one day, as he was going to his course of lectures, noticed a little pebble on the road; he picked it up, and examined with admiration the mottled veins. All at once the lecture which he ought to be attending to returned to his mind; he drew out his watch; perceiving that the hour approached, he hastily doubled his pace, carefully placed the pebble in his pocket, and threw his watch over the parapet of the Pont des Arts.

Popular Astronomy: a General Description of the Heavens (1884), translated by J. Ellard Gore, (1907), 93.

At the entrance to the observatory Stjerneborg located underground, Tycho Brahe built an Ionic portal. On top of this were three sculptured lions. On both sides were inscriptions and on the backside was a longer inscription in gold letters on a porphyry stone: Consecrated to the all-good, great God and Posterity. Tycho Brahe, Son of Otto, who realized that Astronomy, the oldest and most distinguished of all sciences, had indeed been studied for a long time and to a great extent, but still had not obtained sufficient firmness or had been purified of errors, in order to reform it and raise it to perfection, invented and with incredible labour, industry, and expenditure constructed various exact instruments suitable for all kinds of observations of the celestial bodies, and placed them partly in the neighbouring castle of Uraniborg, which was built for the same purpose, partly in these subterranean rooms for a more constant and useful application, and recommending, hallowing, and consecrating this very rare and costly treasure to you, you glorious Posterity, who will live for ever and ever, he, who has both begun and finished everything on this island, after erecting this monument, beseeches and adjures you that in honour of the eternal God, creator of the wonderful clockwork of the heavens, and for the propagation of the divine science and for the celebrity of the fatherland, you will constantly preserve it and not let it decay with old age or any other injury or be removed to any other place or in any way be molested, if for no other reason, at any rate out of reverence to the creator’s eye, which watches over the universe. Greetings to you who read this and act accordingly. Farewell!

(Translated from the original in Latin)

Bacon himself was very ignorant of all that had been done by mathematics; and, strange to say, he especially objected to astronomy being handed over to the mathematicians. Leverrier and Adams, calculating an unknown planet into a visible existence by enormous heaps of algebra, furnish the last comment of note on this specimen of the goodness of Bacon’s view… . Mathematics was beginning to be the great instrument of exact inquiry: Bacon threw the science aside, from ignorance, just at the time when his enormous sagacity, applied to knowledge, would have made him see the part it was to play. If Newton had taken Bacon for his master, not he, but somebody else, would have been Newton.

In Budget of Paradoxes (1872), 53-54.

BAROMETER, n. An ingenious instrument which indicates what kind of weather we are having.

The Collected Works of Ambrose Bierce (1911), Vol. 7, The Devil's Dictionary, 32.

Be not afeard.

The isle is full of noises,

Sounds, and sweet airs, that give delight and hurt not.

Sometimes a thousand twangling instruments

Will hum about mine ears; and sometime voices

That if I then had waked after long sleep

Will make me sleep again; and then, in dreaming

The clouds methought would open and show riches

Ready to drop upon me, that, when I waked,

I cried to dream again.

The Tempest (1611), III, ii.

Briefly, in the act of composition, as an instrument there intervenes and is most potent, fire, flaming, fervid, hot; but in the very substance of the compound there intervenes, as an ingredient, as it is commonly called, as a material principle and as a constituent of the whole compound the material and principle of fire, not fire itself. This I was the first to call phlogiston.

Specimen Beccherianum (1703). Trans. J. R. Partington, A History of Chemistry (1961), Vol. 2, 668.

But many of our imaginations and investigations of nature are futile, especially when we see little living animals and see their legs and must judge the same to be ten thousand times thinner than a hair of my beard, and when I see animals living that are more than a hundred times smaller and am unable to observe any legs at all, I still conclude from their structure and the movements of their bodies that they do have legs... and therefore legs in proportion to their bodies, just as is the case with the larger animals upon which I can see legs... Taking this number to be about a hundred times smaller, we therefore find a million legs, all these together being as thick as a hair from my beard, and these legs, besides having the instruments for movement, must be provided with vessels to carry food.

Letter to N. Grew, 27 Sep 1678. In The Collected Letters of Antoni van Leeuwenhoek (1957), Vol. 2, 391.

But science is the great instrument of social change, all the greater because its object is not change but knowledge, and its silent appropriation of this dominant function, amid the din of political and religious strife, is the most vital of all the revolutions which have marked the development of modern civilisation.

Decadence: Henry Sidgwick Memorial Lecture (1908), 55-6.

By research in pure science I mean research made without any idea of application to industrial matters but solely with the view of extending our knowledge of the Laws of Nature. I will give just one example of the ‘utility’ of this kind of research, one that has been brought into great prominence by the War—I mean the use of X-rays in surgery. Now, not to speak of what is beyond money value, the saving of pain, or, it may be, the life of the wounded, and of bitter grief to those who loved them, the benefit which the state has derived from the restoration of so many to life and limb, able to render services which would otherwise have been lost, is almost incalculable. Now, how was this method discovered? It was not the result of a research in applied science starting to find an improved method of locating bullet wounds. This might have led to improved probes, but we cannot imagine it leading to the discovery of X-rays. No, this method is due to an investigation in pure science, made with the object of discovering what is the nature of Electricity. The experiments which led to this discovery seemed to be as remote from ‘humanistic interest’ —to use a much misappropriated word—as anything that could well be imagined. The apparatus consisted of glass vessels from which the last drops of air had been sucked, and which emitted a weird greenish light when stimulated by formidable looking instruments called induction coils. Near by, perhaps, were great coils of wire and iron built up into electro-magnets. I know well the impression it made on the average spectator, for I have been occupied in experiments of this kind nearly all my life, notwithstanding the advice, given in perfect good faith, by non-scientific visitors to the laboratory, to put that aside and spend my time on something useful.

In Speech made on behalf of a delegation from the Conjoint Board of Scientific Studies in 1916 to Lord Crewe, then Lord President of the Council. In George Paget Thomson, J. J. Thomson and the Cavendish Laboratory in His Day (1965), 167-8.

Call Archimedes from his buried tomb

Upon the plain of vanished Syracuse,

And feelingly the sage shall make report

How insecure, how baseless in itself,

Is the philosophy, whose sway depends

On mere material instruments—how weak

Those arts, and high inventions, if unpropped

By virtue.

In 'The Excursion', as quoted in review, 'The Excursion, Being a Portion of the Recluse, a Poem', The Edinburgh Review (Nov 1814), 24, No. 47, 26.

Chemistry is one of those branches of human knowledge which has built itself upon methods and instruments by which truth can presumably be determined. It has survived and grown because all its precepts and principles can be re-tested at any time and anywhere. So long as it remained the mysterious alchemy by which a few devotees, by devious and dubious means, presumed to change baser metals into gold, it did not flourish, but when it dealt with the fact that 56 g. of fine iron, when heated with 32 g. of flowers of sulfur, generated extra heat and gave exactly 88 g. of an entirely new substance, then additional steps could be taken by anyone. Scientific research in chemistry, since the birth of the balance and the thermometer, has been a steady growth of test and observation. It has disclosed a finite number of elementary reagents composing an infinite universe, and it is devoted to their inter-reaction for the benefit of mankind.

Address upon receiving the Perkin Medal Award, 'The Big Things in Chemistry', The Journal of Industrial and Engineering Chemistry (Feb 1921), 13, No. 2, 163.

Chymistry. … An art whereby sensible bodies contained in vessels … are so changed, by means of certain instruments, and principally fire, that their several powers and virtues are thereby discovered, with a view to philosophy or medicine.

An antiquated definition, as quoted in Samuel Johnson, entry for 'Chymistry' in Dictionary of the English Language (1785). Also in The Quarterly Journal of Science, Literature, and the Arts (1821), 284, wherein a letter writer (only identified as “C”) points out that this definition still appeared in the, then, latest Rev. Mr. Todd’s Edition of Johnson’s Dictionary, and that it showed “very little improvement of scientific words.” The letter included examples of better definitions by Black and by Davy.

Discovery follows discovery, each both raising and answering questions, each ending a long search, and each providing the new instruments for a new search.

In 'Prospects in the Arts and Sciences,' in Fifty Famous Essays (1964).

Elaborate apparatus plays an important part in the science of to-day, but I sometimes wonder if we are not inclined to forget that the most important instrument in research must always be the mind of man.

The Art of Scientific Investigation (1951), ix.

Even in Europe a change has sensibly taken place in the mind of man. Science has liberated the ideas of those who read and reflect, and the American example has kindled feelings of right in the people. An insurrection has consequently begun, of science, talents, and courage, against rank and birth, which have fallen into contempt. It has failed in its first effort, because the mobs of the cities, the instrument used for its accomplishment, debased by ignorance, poverty and vice, could not be restrained to rational action. But the world will soon recover from the panic of this first catastrophe.

Letter to John Adams (Monticello, 1813). In Thomas Jefferson and John P. Foley (ed.), The Jeffersonian Cyclopedia (1900), 49. From Paul Leicester Ford (ed.), The Writings of Thomas Jefferson (1892-99). Vol 4, 439.

Every natural scientist who thinks with any degree of consistency at all will, I think, come to the view that all those capacities that we understand by the phrase psychic activities (Seelenthätigkeiten) are but functions of the brain substance; or, to express myself a bit crudely here, that thoughts stand in the same relation to the brain as gall does to the liver or urine to the kidneys. To assume a soul that makes use of the brain as an instrument with which it can work as it pleases is pure nonsense; we would then be forced to assume a special soul for every function of the body as well.

In Physiologische Briefe für Gebildete aller Stände (1845-1847), 3 parts, 206, as translated in Frederick Gregory, Scientific Materialism in Nineteenth Century Germany (1977), 64.

Every scientist is an agent of cultural change. He may not be a champion of change; he may even resist it, as scholars of the past resisted the new truths of historical geology, biological evolution, unitary chemistry, and non-Euclidean geometry. But to the extent that he is a true professional, the scientist is inescapably an agent of change. His tools are the instruments of change—skepticism, the challenge to established authority, criticism, rationality, and individuality.

In Science in Russian Culture: A History to 1860 (1963).

For just as musical instruments are brought to perfection of clearness in the sound of their strings by means of bronze plates or horn sounding boards, so the ancients devised methods of increasing the power of the voice in theaters through the application of the science of harmony.

In Vitruvius Pollio and Morris Hicky Morgan (trans.), 'Book V: Chapter III', Vitruvius, the Ten Books on Architecture (1914), 139. From the original Latin, “Ergo veteres Architecti, naturae vestigia persecuti, indagationibus vocis scandentes theatrorum perfecerunt gradationes: & quaesiuerunt per canonicam mathematicorum, & musicam rationem, ut quaecunq; vox esset in scena, clarior & suauior ad spectatorum perueniret aures. Uti enim organa in aeneis laminis, aut corneis, diesi ad chordarum sonituum claritatem perficiuntur: sic theatrorum, per harmonicen ad augendam vocem, ratiocinationes ab antiquis sunt constitutae.” In De Architectura libri decem (1552), 175.

For the Members of the Assembly having before their eyes so many fatal Instances of the errors and falshoods, in which the greatest part of mankind has so long wandred, because they rely'd upon the strength of humane Reason alone, have begun anew to correct all Hypotheses by sense, as Seamen do their dead Reckonings by Cœlestial Observations; and to this purpose it has been their principal indeavour to enlarge and strengthen the Senses by Medicine, and by such outward Instruments as are proper for their particular works.

Micrographia, or some Physiological Descriptions of Minute Bodies made by Magnifying Glasses with Observations and Inquiries thereupon (1665), preface sig.

For those [observations] that I made in Leipzig in my youth and up to my 21st year, I usually call childish and of doubtful value. Those that I took later until my 28th year [i.e., until 1574] I call juvenile and fairly serviceable. The third group, however, which I made at Uraniborg during approximately the last 21 years with the greatest care and with very accurate instruments at a more mature age, until I was fifty years of age, those I call the observations of my manhood, completely valid and absolutely certain, and this is my opinion of them.

In H. Raeder, E. and B. Stromgren (eds. and trans.), Tycho Brahe’s Description of his Instruments and Scientific Work: as given in Astronomiae Instauratae Mechanica, Wandesburgi 1598 (1946), 110.

Fourier’s Theorem … is not only one of the most beautiful results of modern analysis, but it may be said to furnish an indispensable instrument in the treatment of nearly every recondite question in modern physics. To mention only sonorous vibrations, the propagation of electric signals along a telegraph wire, and the conduction of heat by the earth’s crust, as subjects in their generality intractable without it, is to give but a feeble idea of its importance.

In William Thomson and Peter Guthrie Tait, Treatise on Natural Philosophy (1867), Vol. 1, 28.

From the point of view of the pure morphologist the recapitulation theory is an instrument of research enabling him to reconstruct probable lines of descent; from the standpoint of the student of development and heredity the fact of recapitulation is a difficult problem whose solution would perhaps give the key to a true understanding of the real nature of heredity.

Form and Function: A Contribution to the History of Animal Morphology (1916), 312-3.

Furnished as all Europe now is with Academies of Science, with nice instruments and the spirit of experiment, the progress of human knowledge will be rapid and discoveries made of which we have at present no conception. I begin to be almost sorry I was born so soon, since I cannot have the happiness of knowing what will be known a hundred years hence.

…...

God having designed man for a sociable creature, furnished him with language, which was to be the great instrument and tie of society.

In An Essay Concerning Human Understanding (1849), Book 3, Chap 1, Sec. 1, 288.

Governments and parliaments must find that astronomy is one of the sciences which cost most dear: the least instrument costs hundreds of thousands of dollars, the least observatory costs millions; each eclipse carries with it supplementary appropriations. And all that for stars which are so far away, which are complete strangers to our electoral contests, and in all probability will never take any part in them. It must be that our politicians have retained a remnant of idealism, a vague instinct for what is grand; truly, I think they have been calumniated; they should be encouraged and shown that this instinct does not deceive them, that they are not dupes of that idealism.

In Henri Poincaré and George Bruce Halsted (trans.), The Value of Science: Essential Writings of Henri Poincare (1907), 84.

Had that wordy vacuum skull thought what this would do to every critical figure in science and engineering? … Throw away every book, table, instrument, and start over? I know that some of my ancestors did that in switching from old English units to MKS—but they did it to make things easier.

In The Moon Is a Harsh Mistress (1966), 160.

He made an instrument to know

If the moon shine at full or no;

That would, as soon as e’er she shone straight,

Whether ’twere day or night demonstrate;

Tell what her d’ameter to an inch is,

And prove that she’s not made of green cheese.

He should avail himself of their resources in such ways as to advance the expression of the spirit in the life of mankind. He should use them so as to afford to every human being the greatest possible opportunity for developing and expressing his distinctively human capacity as an instrument of the spirit, as a centre of sensitive and intelligent awareness of the objective universe, as a centre of love of all lovely things, and of creative action for the spirit.

…...

I am not yet so lost in lexicography, as to forget that words are the daughters of the earth, and that things are the sons of heaven. Language is only the instrument of science, and words are but the signs of ideas: I wish, however, that the instrument might be less apt to decay, and that signs might be permanent, like the things which they denote.

'Preface', A Dictionary of the English Language (1755), Vol. 1.

I can certainly wish for new, large, and properly constructed instruments, and enough of them, but to state where and by what means they are to be procured, this I cannot do. Tycho Brahe has given Mastlin an instrument of metal as a present, which would be very useful if Mastlin could afford the cost of transporting it from the Baltic, and if he could hope that it would travel such a long way undamaged… . One can really ask for nothing better for the observation of the sun than an opening in a tower and a protected place underneath.

As quoted in James Bruce Ross and Mary Martin McLaughlin, The Portable Renaissance Reader (1968), 605.

I can see him now at the blackboard, chalk in one hand and rubber in the other, writing rapidly and erasing recklessly, pausing every few minutes to face the class and comment earnestly, perhaps on the results of an elaborate calculation, perhaps on the greatness of the Creator, perhaps on the beauty and grandeur of Mathematics, always with a capital M. To him mathematics was not the handmaid of philosophy. It was not a humanly devised instrument of investigation, it was Philosophy itself, the divine revealer of TRUTH.

Writing as a Professor Emeritus at Harvard University, a former student of Peirce, in 'Benjamin Peirce: II. Reminiscences', The American Mathematical Monthly (Jan 1925), 32, No. 1, 5.

I have accumulated a wealth of knowledge in innumerable spheres and enjoyed it as an always ready instrument for exercising the mind and penetrating further and further. Best of all, mine has been a life of loving and being loved. What a tragedy that all this will disappear with the used-up body!

In and Out of the Ivory Tower (1960), 311.

I have been branded with folly and madness for attempting what the world calls impossibilities, and even from the great engineer, the late James Watt, who said ... that I deserved hanging for bringing into use the high-pressure engine. This has so far been my reward from the public; but should this be all, I shall be satisfied by the great secret pleasure and laudable pride that I feel in my own breast from having been the instrument of bringing forward new principles and new arrangements of boundless value to my country, and however much I may be straitened in pecuniary circumstances, the great honour of being a useful subject can never be taken from me, which far exceeds riches.

From letter to Davies Gilbert, written a few months before Trevithick's last illness. Quoted in Francis Trevithick, Life of Richard Trevithick: With an Account of his Inventions (1872), Vol. 2, 395-6.

I have tried to improve telescopes and practiced continually to see with them. These instruments have play'd me so many tricks that I have at last found them out in many of their humours.

Quoted in Constance Anne Lubbock, The Herschel Chronicle: the Life-story of William Herschel and his Sister, Caroline Herschel (1933), 102.

I shall collect plants and fossils, and with the best of instruments make astronomic observations. Yet this is not the main purpose of my journey. I shall endeavor to find out how nature's forces act upon one another, and in what manner the geographic environment exerts its influence on animals and plants. In short, I must find out about the harmony in nature.

Letter to Karl Freiesleben (Jun 1799). In Helmut de Terra, Humboldt: The Life and Times of Alexander von Humboldt 1769-1859 (1955), 87.

I specifically paused to show that, if there were such machines with the organs and shape of a monkey or of some other non-rational animal, we would have no way of discovering that they are not the same as these animals. But if there were machines that resembled our bodies and if they imitated our actions as much as is morally possible, we would always have two very certain means for recognizing that, none the less, they are not genuinely human. The first is that they would never be able to use speech, or other signs composed by themselves, as we do to express our thoughts to others. For one could easily conceive of a machine that is made in such a way that it utters words, and even that it would utter some words in response to physical actions that cause a change in its organs—for example, if someone touched it in a particular place, it would ask what one wishes to say to it, or if it were touched somewhere else, it would cry out that it was being hurt, and so on. But it could not arrange words in different ways to reply to the meaning of everything that is said in its presence, as even the most unintelligent human beings can do. The second means is that, even if they did many things as well as or, possibly, better than anyone of us, they would infallibly fail in others. Thus one would discover that they did not act on the basis of knowledge, but merely as a result of the disposition of their organs. For whereas reason is a universal instrument that can be used in all kinds of situations, these organs need a specific disposition for every particular action.

Discourse on Method in Discourse on Method and Related Writings (1637), trans. Desmond M. Clarke, Penguin edition (1999), Part 5, 40.

I think that the event which, more than anything else, led me to the search for ways of making more powerful radio telescopes, was the recognition, in 1952, that the intense source in the constellation of Cygnus was a distant galaxy—1000 million light years away. This discovery showed that some galaxies were capable of producing radio emission about a million times more intense than that from our own Galaxy or the Andromeda nebula, and the mechanisms responsible were quite unknown. ... [T]he possibilities were so exciting even in 1952 that my colleagues and I set about the task of designing instruments capable of extending the observations to weaker and weaker sources, and of exploring their internal structure.

From Nobel Lecture (12 Dec 1974). In Stig Lundqvist (ed.), Nobel Lectures, Physics 1971-1980 (1992), 187.

I will give no deadly medicine to any one if asked, nor suggest any such counsel; and in like manner I will not give any woman the instrument to procure abortion. … I will not cut a person who is suffering with stone, but will leave this to be done by men who are practitioners of such work.

From 'The Oath', as translated by Francis Adams in The Genuine Works of Hippocrates (1849), Vol. 2, 780.

I would by all means have men beware, lest Æsop’s pretty fable of the fly that sate [sic] on the pole of a chariot at the Olympic races and said, “What a dust do I raise,” be verified in them. For so it is that some small observation, and that disturbed sometimes by the instrument, sometimes by the eye, sometimes by the calculation, and which may be owing to some real change in the heaven, raises new heavens and new spheres and circles.

'Of Vain Glory' (1625) in James Spedding, Robert Ellis and Douglas Heath (eds.), The Works of Francis Bacon (1887-1901), Vol. 6, 503.

If a mathematician of the past, an Archimedes or even a Descartes, could view the field of geometry in its present condition, the first feature to impress him would be its lack of concreteness. There are whole classes of geometric theories which proceed not only without models and diagrams, but without the slightest (apparent) use of spatial intuition. In the main this is due to the power of the analytic instruments of investigations as compared with the purely geometric.

In 'The Present Problems in Geometry', Bulletin American Mathematical Society (1906), 286.

If any person thinks the examination of the rest of the animal kingdom an unworthy task, he must hold in like disesteem the study of man. For no one can look at the primordia of the human frame—blood, flesh, bones, vessels, and the like—without much repugnance. Moreover, in every inquiry, the examination of material elements and instruments is not to be regarded as final, but as ancillary to the conception of the total form. Thus, the true object of architecture is not bricks, mortar or timber, but the house; and so the principal object of natural philosophy is not the material elements, but their composition, and the totality of the form to which they are subservient, and independently of which they have no existence.

On Parts of Animals, Book 1, Chap 5, 645a, 26-36. In W. Ogle (trans.), Aristotle on the Parts of Animals (1882), 17. Alternate translations: “primordia” = “elements”; “Moreover ... Thus” = “Moreover, when anyone of the parts or structures, be it which it may, is under discussion, it must not be supposed that it is its material composition to which attention is being directed or which is the object of the discussion, but rather the total form. Similarly”; “form ... subservient, and” = “totality of the substance.” See alternate translation in Jonathan Barnes (ed.), The Complete Works of Aristotle (1984), Vol. 1, 1004.

If logical training is to consist, not in repeating barbarous scholastic formulas or mechanically tacking together empty majors and minors, but in acquiring dexterity in the use of trustworthy methods of advancing from the known to the unknown, then mathematical investigation must ever remain one of its most indispensable instruments. Once inured to the habit of accurately imagining abstract relations, recognizing the true value of symbolic conceptions, and familiarized with a fixed standard of proof, the mind is equipped for the consideration of quite other objects than lines and angles. The twin treatises of Adam Smith on social science, wherein, by deducing all human phenomena first from the unchecked action of selfishness and then from the unchecked action of sympathy, he arrives at mutually-limiting conclusions of transcendent practical importance, furnish for all time a brilliant illustration of the value of mathematical methods and mathematical discipline.

In 'University Reform', Darwinism and Other Essays (1893), 297-298.

If Nicolaus Copernicus, the distinguished and incomparable master, in this work had not been deprived of exquisite and faultless instruments, he would have left us this science far more well-established. For he, if anybody, was outstanding and had the most perfect understanding of the geometrical and arithmetical requisites for building up this discipline. Nor was he in any respect inferior to Ptolemy; on the contrary, he surpassed him greatly in certain fields, particularly as far as the device of fitness and compendious harmony in hypotheses is concerned. And his apparently absurd opinion that the Earth revolves does not obstruct this estimate, because a circular motion designed to go on uniformly about another point than the very center of the circle, as actually found in the Ptolemaic hypotheses of all the planets except that of the Sun, offends against the very basic principles of our discipline in a far more absurd and intolerable way than does the attributing to the Earth one motion or another which, being a natural motion, turns out to be imperceptible. There does not at all arise from this assumption so many unsuitable consequences as most people think.

From Letter (20 Jan 1587) to Christopher Rothman, chief astronomer of the Landgrave of Hesse. Webmaster seeks more information to better cite this source — please contact if you can furnish more. Webmaster originally found this quote introduced by an uncredited anonymous commentary explaining the context: “It was not just the Church that resisted the heliocentrism of Copernicus. Many prominent figures, in the decades following the 1543 publication of De Revolutionibus, regarded the Copernican model of the universe as a mathematical artifice which, though it yielded astronomical predictions of superior accuracy, could not be considered a true representation of physical reality.”

In physical science in most cases a new discovery means that by some new idea, new instrument, or some new and better use of an old one, Nature has been wooed in some new way.

In 'The History of a Star', The Nineteenth Century (Nov 1889), 26, No. 153, 785.

In the beginning of the year 1800 the illustrious professor [Volta] conceived the idea of forming a long column by piling up, in succession, a disc of copper, a disc of zinc, and a disc of wet cloth, with scrupulous attention to not changing this order. What could be expected beforehand from such a combination? Well, I do not hesitate to say, this apparently inert mass, this bizarre assembly, this pile of so many couples of unequal metals separated by a little liquid is, in the singularity of effect, the most marvellous instrument which men have yet invented, the telescope and the steam engine not excepted.

In François Arago, 'Éloge for Volta' (1831), Oeuvres Completes de François Arago (1854), Vol. 1, 219-20.

In war, science has proven itself an evil genius; it has made war more terrible than it ever was before. Man used to be content to slaughter his fellowmen on a single plane—the earth’s surface. Science has taught him to go down into the water and shoot up from below and to go up into the clouds and shoot down from above, thus making the battlefield three times as bloody as it was before; but science does not teach brotherly love. Science has made war so hellish that civilization was about to commit suicide; and now we are told that newly discovered instruments of destruction will make the cruelties of the late war seem trivial in comparison with the cruelties of wars that may come in the future.

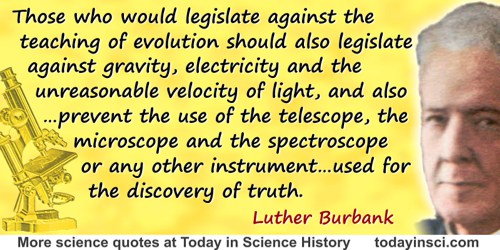

Proposed summation written for the Scopes Monkey Trial (1925), in Genevieve Forbes Herrick and John Origen Herrick, The Life of William Jennings Bryan (1925), 405. This speech was prepared for delivery at the trial, but was never heard there, as both sides mutually agreed to forego arguments to the jury.

Indeed, the aim of teaching [mathematics] should be rather to strengthen his [the pupil’s] faculties, and to supply a method of reasoning applicable to other subjects, than to furnish him with an instrument for solving practical problems.

In John Perry (ed.), Discussion on the Teaching of Mathematics (1901), 84. The discussion took place on 14 Sep 1901 at the British Association at Glasgow, during a joint meeting of the mathematics and physics sections with the education section. The proceedings began with an address by John Perry. Magnus spoke in the Discussion that followed.

It is both a sad and a happy fact of engineering history that disasters have been powerful instruments of change. Designers learn from failure. Industrial society did not invent grand works of engineering, and it was not the first to know design failure. What it did do was develop powerful techniques for learning from the experience of past disasters. It is extremely rare today for an apartment house in North America, Europe, or Japan to fall down. Ancient Rome had large apartment buildings too, but while its public baths, bridges and aqueducts have lasted for two thousand years, its big residential blocks collapsed with appalling regularity. Not one is left in modern Rome, even as ruin.

In Why Things Bite Back: Technology and the Revenge of Unintended Consequences (1997), 23.

It is the old experience that a rude instrument in the hand of a master craftsman will achieve more than the finest tool wielded by the uninspired journeyman.

Quoted in The Life, Letters and Labours of Francis Galton (1930), Vol. 3A, 50.

It were indeed to be wish’d that our art had been less ingenious, in contriving means destructive to mankind; we mean those instruments of war, which were unknown to the ancients, and have made such havoc among the moderns. But as men have always been bent on seeking each other’s destruction by continual wars; and as force, when brought against us, can only be repelled by force; the chief support of war, must, after money, be now sought in chemistry.

A New Method of Chemistry, 3rd edition (1753), Vol. I, trans. P. Shaw, 189-90.

Knowledge is like a knife. In the hands of a well-balanced adult it is an instrument for good of inestimable value; but in the hands of a child, an idiot, a criminal, a drunkard or an insane man, it may cause havoc, misery, suffering and crime. Science and religion have this in common, that their noble aims, their power for good, have often, with wrong men, deteriorated into a boomerang to the human race.

In 'Applied Chemistry', Science (22 Oct 1915), New Series, 42, No. 1086, 548.

Language is only the instrument of science, and words are but the signs of ideas.

In 'Preface to the English Dictionary', The Works of Samuel Johnson (1810), Vol. 2, 37.

Language is the principal tool with which we communicate; but when words are used carelessly or mistakenly, what was intended to advance mutual understanding may in fact hinder it; our instrument becomes our burden.

Irving M. Copi and Carl Cohen (probably? in their Introduction to Logic), in K. Srinagesh, The Principles of Experimental Research (2006), 15.

Like buried treasures, the outposts of the universe have beckoned to the adventurous from immemorial times. Princes and potentates, political or industrial, equally with men of science, have felt the lure of the uncharted seas of space, and through their provision of instrumental means the sphere of exploration has made new discoveries and brought back permanent additions to our knowledge of the heavens.

From article by Hale in Harper's Magazine, 156, (1928), 639-646, in which he urged building a 200-inch optical telescope. Cited in Kenneth R. Lang, Parting the Cosmic Veil (2006), 82 and 210. Also in George Ellery Hale, Signals From the Stars (1931), 1.

Long ago it was said: If Tycho had had instruments ten times as precise, we would never have had a Kepler, or a Newton, or Astronomy.

In La Science et l’Hypothèse (1901, 1908), 211, as translated in Henri Poincaré and William John Greenstreet (trans.), Science and Hypothesis (1902, 1905), 181. From the original French, “Il y a longtemps qu’on l’a dit: Si Tycho avait eu des instruments dix fois plus précis, il n’y aurait jamais eu ni Képler, ni Newton, ni Astronomie.”

Many of the nobles and senators, although of great age, mounted more than once to the top of the highest church in Venice, in order to see sails and shipping … so far off that it was two hours before they were seen without my spy-glass …, for the effect of my instrument is such that it makes an object fifty miles off appear as large as if it were only five miles away. ... The Senate, knowing the way in which I had served it for seventeen years at Padua, ... ordered my election to the professorship for life.

Quoted in Will Durant, Ariel Durant, The Age of Reason Begins (1961), 604. From Charles Singer, Studies in the History and Method of Science (1917), Vol. 1, 228.

Many times every day I think of taking off in that missile. I’ve tried a thousand times to visualize that moment, to anticipate how I’ll feel if I’m first, which I very much want to be. But whether I go first or go later, I approach it now with some awe, and I’m sure I’ll approach it with even more awe on my day. In spite of the fact that I will be very busy getting set and keeping tabs on all the instruments, there’s no question that I’ll need—and will have—all my confidence.

As he wrote in an article for Life (14 Sep 1959), 38.

Mathematicians attach great importance to the elegance of their methods and their results. This is not pure dilettantism. What is it indeed that gives us the feeling of elegance in a solution, in a demonstration? It is the harmony of the diverse parts, their symmetry, their happy balance; in a word it is all that introduces order, all that gives unity, that permits us to see clearly and to comprehend at once both the ensemble and the details. But this is exactly what yields great results, in fact the more we see this aggregate clearly and at a single glance, the better we perceive its analogies with other neighboring objects, consequently the more chances we have of divining the possible generalizations. Elegance may produce the feeling of the unforeseen by the unexpected meeting of objects we are not accustomed to bring together; there again it is fruitful, since it thus unveils for us kinships before unrecognized. It is fruitful even when it results only from the contrast between the simplicity of the means and the complexity of the problem set; it makes us then think of the reason for this contrast and very often makes us see that chance is not the reason; that it is to be found in some unexpected law. In a word, the feeling of mathematical elegance is only the satisfaction due to any adaptation of the solution to the needs of our mind, and it is because of this very adaptation that this solution can be for us an instrument. Consequently this esthetic satisfaction is bound up with the economy of thought.

In 'The Future of Mathematics', Monist, 20, 80. Translated from the French by George Bruce Halsted.

Mathematics gives the young man a clear idea of demonstration and habituates him to form long trains of thought and reasoning methodically connected and sustained by the final certainty of the result; and it has the further advantage, from a purely moral point of view, of inspiring an absolute and fanatical respect for truth. In addition to all this, mathematics, and chiefly algebra and infinitesimal calculus, excite to a high degree the conception of the signs and symbols—necessary instruments to extend the power and reach of the human mind by summarizing an aggregate of relations in a condensed form and in a kind of mechanical way. These auxiliaries are of special value in mathematics because they are there adequate to their definitions, a characteristic which they do not possess to the same degree in the physical and mathematical [natural?] sciences.

There are, in fact, a mass of mental and moral faculties that can be put in full play only by instruction in mathematics; and they would be made still more available if the teaching was directed so as to leave free play to the personal work of the student.

In 'Science as an Instrument of Education', Popular Science Monthly (1897), 253.

Mathematics in its pure form, as arithmetic, algebra, geometry, and the applications of the analytic method, as well as mathematics applied to matter and force, or statics and dynamics, furnishes the peculiar study that gives to us, whether as children or as men, the command of nature in this its quantitative aspect; mathematics furnishes the instrument, the tool of thought, which we wield in this realm.

In Psychologic Foundations of Education (1898), 325.

Men can construct a science with very few instruments, or with very plain instruments; but no one on earth could construct a science with unreliable instruments. A man might work out the whole of mathematics with a handful of pebbles, but not with a handful of clay which was always falling apart into new fragments, and falling together into new combinations. A man might measure heaven and earth with a reed, but not with a growing reed.

Heretics (1905), 146-7.

Metals are the great agents by which we can examine the recesses of nature; and their uses are so multiplied, that they have become of the greatest importance in every occupation of life. They are the instruments of all our improvements, of civilization itself, and are even subservient to the progress of the human mind towards perfection. They differ so much from each other, that nature seems to have had in view all the necessities of man, in order that she might suit every possible purpose his ingenuity can invent or his wants require.

From 'Classification of Simple Bodies', The Artist & Tradesman’s Guide: Embracing Some Leading Facts & Principles of Science, and a Variety of Matter Adapted to the Wants of the Artist, Mechanic, Manufacturer, and Mercantile Community (1827), 16.

Modern philosophers, to avoid circumlocutions, call that instrument, wherein a cylinder of quicksilver, of between 28 to 32 inches in altitude, is kept suspended after the manner of the Torricellian experiment, a barometer or baroscope.

Opening sentence of 'A Relation of Some Mercurial Observations, and Their Results', Philosophical Transactions of the Royal Society (12 Feb 1665/6), 1, No. 9, 153. Since no author is identified, it is here assumed that the article was written by the journal (founding) editor, Oldenburg.

My Volta is always busy. What an industrious scholar he is! When he is not paying visits to museums or learned men, he devotes himself to experiments. He touches, investigates, reflects, takes notes on everything. I regret to say that everywhere, inside the coach as on any desk, I am faced with his handkerchief, which he uses to wipe indifferently his hands, nose and instruments.

As translated and quoted in Giuliano Pancaldi, Volta: Science and Culture in the Age of Enlightenment (2005), 154.

Neither the naked hand nor the understanding left to itself can effect much. It is by instruments and helps that the work is done, which are as much wanted for the understanding as for the hand. And as the instruments of the hand either give motion or guide it, so the instruments of the mind supply either suggestions for the understanding or cautions.

From Novum Organum (1620), Book 1, Aphorism 2. Translated as The New Organon: Aphorisms Concerning the Interpretation of Nature and the Kingdom of Man, collected in James Spedding, Robert Ellis and Douglas Heath (eds.), The Works of Francis Bacon (1857), Vol. 4, 47.

Nothing afflicted Marcellus so much as the death of Archimedes, who was then, as fate would have it, intent upon working out some problem by a diagram, and having fixed his mind alike and his eyes upon the subject of his speculation, he never noticed the incursion of the Romans, nor that the city was taken. In this transport of study and contemplation, a soldier, unexpectedly coming up to him, commanded him to follow to Marcellus, which he declined to do before he had worked out his problem to a demonstration; the soldier, enraged, drew his sword and ran him through. Others write, that a Roman soldier, running upon him with a drawn sword, offered to kill him; and that Archimedes, looking back, earnestly besought him to hold his hand a little while, that he might not leave what he was at work upon inconclusive and imperfect; but the soldier, nothing moved by his entreaty, instantly killed him. Others again relate, that as Archimedes was carrying to Marcellus mathematical instruments, dials, spheres, and angles, by which the magnitude of the sun might be measured to the sight, some soldiers seeing him, and thinking that he carried gold in a vessel, slew him. Certain it is, that his death was very afflicting to Marcellus; and that Marcellus ever after regarded him that killed him as a murderer; and that he sought for his kindred and honoured them with signal favours.

— Plutarch

In John Dryden (trans.), Life of Marcellus.

Nothing tends so much to the advancement of knowledge as the application of a new instrument. The native intellectual powers of men in different times are not so much the causes of the different success of their labors, as the peculiar nature of the means and artificial resources in their possession.

In Elements of Chemical Philosophy (1812), Vol. 1, Part 1, 28.

Now I should like to ask you for an observation; since I possess no instruments, I must appeal to others.

As quoted in James Bruce Ross and Mary Martin McLaughlin, The Portable Renaissance Reader (1968), 600.

Number is therefore the most primitive instrument of bringing an unconscious awareness of order into consciousness.

In Creation Myths (1995), 326.

O telescope, instrument of knowledge, more precious than any sceptre.

Letter to Galileo (1610). Quoted in Timothy Ferris, Coming of Age in the Milky Way (2003), 95.

Of our three principal instruments for interrogating Nature,—observation, experiment, and comparison,—the second plays in biology a quite subordinate part. But while, on the one hand, the extreme complication of causes involved in vital processes renders the application of experiment altogether precarious in its results, on the other hand, the endless variety of organic phenomena offers peculiar facilities for the successful employment of comparison and analogy.

In 'University Reform', Darwinism and Other Essays (1893), 302.

Our ideas are only intellectual instruments which we use to break into phenomena; we must change them when they have served their purpose, as we change a blunt lancet that we have used long enough.

In An Introduction to the Study of Experimental Medicine (1865).

Physical investigation, more than anything besides, helps to teach us the actual value and right use of the Imagination—of that wondrous faculty, which, left to ramble uncontrolled, leads us astray into a wilderness of perplexities and errors, a land of mists and shadows; but which, properly controlled by experience and reflection, becomes the noblest attribute of man; the source of poetic genius, the instrument of discovery in Science, without the aid of which Newton would never have invented fluxions, nor Davy have decomposed the earths and alkalies, nor would Columbus have found another Continent.

Presidential Address to Anniversary meeting of the Royal Society (30 Nov 1859), Proceedings of the Royal Society of London (1860), 10, 165.

Portable communication instruments will be developed that will enable an individual to communicate directly and promptly with anyone, anywhere in the world. As we learn more about the secrets of space, we shall increase immeasurably the number of usable frequencies until we are able to assign a separate frequency to an individual as a separate telephone number is assigned to each instrument.

In address (Fall 1946) at a dinner in New York to commemorate the 40 years of Sarnoff’s service in the radio field, 'Institute News and Radio Notes: The Past and Future of Radio', Proceedings of the Institute of Radio Engineers (I.R.E.), (May 1947), 35, No. 5, 498. [In 1946, foretelling the cell phone and a hint of the communications satellite? —Webmaster]

Prayer is not an old woman’s idle amusement. Properly understood and applied, it is the most potent instrument of action.

Quoted in Kim Lim (ed.), 1,001 Pearls of Spiritual Wisdom: Words to Enrich, Inspire, and Guide Your Life (2014), 178.

Reason is man’s faculty for grasping the world by thought, in contradiction to intelligence, which is man’s ability to manipulate the world with the help of thought. Reason is man’s instrument for arriving at the truth, intelligence is man's instrument for manipulating the world more successfully; the former is essentially human, the latter belongs to the animal part of man.

In The Sane Society (1955), 64.

Science has to be understood in its broadest sense, as a method for apprehending all observable reality, and not merely as an instrument for acquiring specialized knowledge.

In Alfred Armand Montapert, Words of Wisdom to Live By: An Encyclopedia of Wisdom in Condensed Form (1986), 217, without citation. If you know the primary source, please contact Webmaster.

Science is far from a perfect instrument of knowledge. It’s just the best one we have. In this respect, as in many others, it’s like democracy.

In 'With Science on Our Side', Washington Post (9 Jan 1994).

Science is in a literal sense constructive of new facts. It has no fixed body of facts passively awaiting explanation, for successful theories allow the construction of new instruments—electron microscopes and deep space probes—and the exploration of phenomena that were beyond description—the behavior of transistors, recombinant DNA, and elementary particles, for example. This is a key point in the progressive nature of science—not only are there more elegant or accurate analyses of phenomena already known, but there is also extension of the range of phenomena that exist to be described and explained.

Co-author with Michael A. Arbib, English-born professor of computer science and biomedical engineering (1940-)

Michael A. Arbib and Mary B. Hesse, The Construction of Reality (1986), 8.

Science is not ... a perfect instrument, but it is a superb and invaluable tool that works harm only when taken as an end in itself.

Commentary on The Secret of the Golden Flower

Science, unguided by a higher abstract principle, freely hands over its secrets to a vastly developed and commercially inspired technology, and the latter, even less restrained by a supreme culture saving principle, with the means of science creates all the instruments of power demanded from it by the organization of Might.

In the Shadow of Tomorrow, ch. 9 (1936).

Scientific research was much like prospecting: you went out and you hunted, armed with your maps and instruments, but in the end your preparations did not matter, or even your intuition. You needed your luck, and whatever benefits accrued to the diligent, through sheer, grinding hard work.

The Andromeda Strain (1969)

Scientific wealth tends to accumulate according to the law of compound interest. Every addition to knowledge of the properties of matter supplies the physical scientist with new instrumental means for discovering and interpreting phenomena of nature, which in their turn afford foundations of fresh generalisations, bringing gains of permanent value into the great storehouse of natural philosophy.

From Inaugural Address of the President to British Association for the Advancement of Science, Edinburgh (2 Aug 1871). Printed in The Chemical News (4 Aug 1871), 24, No. 610., 53.

Technology and production can be great benefactors of man, but they are mindless instruments, and if undirected they careen along with a momentum of their own. In our country, they pulverize everything in their path—the landscape, the natural environment,

The Greening of America (1970).

The aim of scientific thought, then, is to apply past experience to new circumstances; the instrument is an observed uniformity in the course of events. By the use of this instrument it gives us information transcending our experience, it enables us to infer things that we have not seen from things that we have seen; and the evidence for the truth of that information depends on our supposing that the uniformity holds good beyond our experience.

'On the Aims and Instruments of Scientific Thought,' a Lecture delivered before the members of the British Association, at Brighton, on 19 Aug 1872, in Leslie Stephen and Frederick Pollock (eds.), Lectures and Essays, by the Late William Kingdon Clifford (1886), 90.

The ancients thought as clearly as we do, had greater skills in the arts and in architecture, but they had never learned the use of the great instrument which has given man control over nature—experiment.

Address at the opening of the new Pathological Institute of the Royal Infirmary, Glasgow (4 Oct 1911). Printed in 'The Pathological Institute of a General Hospital', Glasgow Medical Journal (1911), 76, 327.

The chemists work with inaccurate and poor measuring services, but they employ very good materials. The physicists, on the other hand, use excellent methods and accurate instruments, but they apply these to very inferior materials. The physical chemists combine both these characteristics in that they apply imprecise methods to impure materials.

Quoted in Ralph Oesper, The Human Side of Scientists (1975), 116.

The chief instrument of American statistics is the census, which should accomplish a two-fold object. It should serve the country by making a full and accurate exhibit of the elements of national life and strength, and it should serve the science of statistics by so exhibiting general results that they may be compared with similar data obtained by other nations.

Speech (16 Dec 1867) given while a member of the U.S. House of Representatives, introducing resolution for the appointment of a committee to examine the necessities for legislation upon the subject of the ninth census to be taken the following year. Quoted in John Clark Ridpath, The Life and Work of James A. Garfield (1881), 219.

The day when the scientist, no matter how devoted, may make significant progress alone and without material help is past. This fact is most self-evident in our work. Instead of an attic with a few test tubes, bits of wire and odds and ends, the attack on the atomic nucleus has required the development and construction of great instruments on an engineering scale.

Nobel Prize banquet speech (29 Feb 1940)

The determination of the relationship and mutual dependence of the facts in particular cases must be the first goal of the Physicist; and for this purpose he requires that an exact measurement may be taken in an equally invariable manner anywhere in the world… Also, the history of electricity yields a well-known truth—that the physicist shirking measurement only plays, different from children only in the nature of his game and the construction of his toys.

In 'Mémoire sur la mesure de force de l'électricité', Journal de Physique (1782), 21, 191. English version by Google Translate tweaked by Webmaster. From the original French, “La determination de la relation & de la dépendance mutuelle de ces données dans certains cas particuliers, doit être le premier but du Physicien; & pour cet effet, il falloit une mesure exacte qui indiquât d’une manière invariable & égale dans tous les lieux de la terre, le degré de l'électricité au moyen duquel les expériences ont été faites… Aussi, l’histoire de l'électricité prouve une vérité suffisamment reconnue; c’est que le Physicien sans mesure ne fait que jouer, & qu’il ne diffère en cela des enfans, que par la nature de son jeu & la construction de ses jouets.”

The development of abstract methods during the past few years has given mathematics a new and vital principle which furnishes the most powerful instrument for exhibiting the essential unity of all its branches.

In Lectures on Fundamental Concepts of Algebra and Geometry (1911), 225.

The eyes of the world now look into space, to the moon and to the planets beyond, and we have vowed that we shall not see it governed by a hostile flag of conquest, but by a banner of freedom and peace. We have vowed that we shall not see space filled with weapons of mass destruction, but with instruments of knowledge and understanding.

Address at Rice University in Houston (12 Sep 1962). On website of John F. Kennedy Presidential Library and Museum. [This go-to-the-moon speech was largely written by presidential advisor and speechwriter Ted Sorensen.]

The faith of scientists in the power and truth of mathematics is so implicit that their work has gradually become less and less observation, and more and more calculation. The promiscuous collection and tabulation of data have given way to a process of assigning possible meanings, merely supposed real entities, to mathematical terms, working out the logical results, and then staging certain crucial experiments to check the hypothesis against the actual empirical results. But the facts which are accepted by virtue of these tests are not actually observed at all. With the advance of mathematical technique in physics, the tangible results of experiment have become less and less spectacular; on the other hand, their significance has grown in inverse proportion. The men in the laboratory have departed so far from the old forms of experimentation—typified by Galileo's weights and Franklin's kite—that they cannot be said to observe the actual objects of their curiosity at all; instead, they are watching index needles, revolving drums, and sensitive plates. No psychology of 'association' of sense-experiences can relate these data to the objects they signify, for in most cases the objects have never been experienced. Observation has become almost entirely indirect; and readings take the place of genuine witness.

Philosophy in a New Key: A Study in the Symbolism of Reason, Rite, and Art (1942), 19-20.

The genius of Laplace was a perfect sledge hammer in bursting purely mathematical obstacles; but, like that useful instrument, it gave neither finish nor beauty to the results. In truth, in truism if the reader please, Laplace was neither Lagrange nor Euler, as every student is made to feel. The second is power and symmetry, the third power and simplicity; the first is power without either symmetry or simplicity. But, nevertheless, Laplace never attempted investigation of a subject without leaving upon it the marks of difficulties conquered: sometimes clumsily, sometimes indirectly, always without minuteness of design or arrangement of detail; but still, his end is obtained and the difficulty is conquered.

In 'Review of “Théorie Analytique des Probabilites” par M. le Marquis de Laplace, 3eme edition. Paris. 1820', Dublin Review (1837), 2, 348.

The historian of science may be tempted to claim that when paradigms change, the world itself changes with them. Led by a new paradigm, scientists adopt new instruments and look in new places. Even more important, during revolutions, scientists see new and different things when looking with familiar instruments in places they have looked before. It is rather as if the professional community had been suddenly transported to another planet where familiar objects are seen in a different light and are joined by unfamiliar ones as well.

In The Structure of Scientific Revolutions (1962, 2nd ed. 1970). Excerpt 'Revolutions as Changes of World View', in Joseph Margolis and Jacques Catudal, The Quarrel between Invariance and Flux (2001), 35-36.

The hypothesis is the principal intellectual instrument in research.

In The Art of Scientific Investigation (1957), 52.

The influence of electricity in producing decompositions, although of inestimable value as an instrument of discovery in chemical inquiries, can hardly be said to have been applied to the practical purposes of life, until the same powerful genius [Davy] which detected the principle, applied it, by a singular felicity of reasoning, to arrest the corrosion of the copper-sheathing of vessels. … this was regarded by Laplace as the greatest of Sir Humphry's discoveries.

Reflections on the Decline of Science in England (1830), 16.

The instinct to command others, in its primitive essence, is a carnivorous, altogether bestial and savage instinct. Under the influence of the mental development of man, it takes on a somewhat more ideal form and becomes somewhat ennobled, presenting itself as the instrument of reason and the devoted servant of that abstraction, or political fiction, which is called the public good. But in its essence it remains just as baneful, and it becomes even more so when, with the application of science, it extends its scope and intensifies the power of its action. If there is a devil in history, it is this power principle.

In Mikhail Aleksandrovich Bakunin, Grigorii Petrovich Maksimov, Max Nettlau, The Political Philosophy of Bakunin (1953), 248.

The known is finite, the unknown infinite; intellectually we stand on an islet in the midst of an illimitable ocean of inexplicability. Our business in every generation is to reclaim a little more land, to add something to the extent and the solidity of our possessions. And even a cursory glance at the history of the biological sciences during the last quarter of a century is sufficient to justify the assertion, that the most potent instrument for the extension of the realm of natural knowledge which has come into men’s hands, since the publication of Newton's ‘Principia’, is Darwin's ‘Origin of Species.’

From concluding remarks to a chapter by Thomas Huxley, 'On the Reception of the ‘Origin of Species’', the last chapter in Charles Darwin and Francis Darwin (ed.), The Life and Letters of Charles Darwin (1887), Vol. 1, 557.

The language of analysis, most perfect of all, being in itself a powerful instrument of discoveries, its notations, especially when they are necessary and happily conceived, are so many germs of new calculi.

From Théorie Analytique des Probabilités (1812), 7. As translated in Robert Édouard Moritz, Memorabilia Mathematica; Or, The Philomath’s Quotation-book (1914), 200. From the original French, “La langue de l’Analyse, la plus parfaite de toutes, étant par elle-même un puissant instrument de découvertes, ses notations, lorsqu’elles sont nécessaires et heureusement imaginées, sont autant de germes de nouveaux calculs.”

The measure of the Moon’s distance involves no principle more abstruse than the measure of the distance of a tree on the opposite bank of a river. The principles of construction of the best Astronomical instruments are as simple and as closely referred to matters of common school-education and familiar experience, as are those of the common globes, the steam-engine, or the turning-lathe; the details are usually less complicated.

In 'Introduction' to Popular Astronomy: A Series of Lectures Delivered at Ipswich (5th ed., 1866), viii.

The metaphysical philosopher from his point of view recognizes mathematics as an instrument of education, which strengthens the power of attention, develops the sense of order and the faculty of construction, and enables the mind to grasp under the simple formulae the quantitative differences of physical phenomena.

In Dialogues of Plato (1897), Vol. 2, 78.

The method of producing these numbers is called a sieve by Eratosthenes, since we take the odd numbers mingled and indiscriminate and we separate out of them by this method of production, as if by some instrument or sieve, the prime and incomposite numbers by themselves, and the secondary and composite numbers by themselves, and we find separately those that are mixed.

Nicomachus, Introduction to Arithmetic, 1.13.2. Quoted in Morris R. Cohen and I. E. Drabkin, A Sourcebook in Greek Science (1948), 19-20.
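
For readers who want to see the sieve at work, here is a minimal Python sketch of the procedure the passage describes, applied to the odd numbers as Nicomachus does. The function name odd_sieve and the limit parameter are illustrative assumptions, not anything from the source, and the sketch separates only primes from composites; it does not attempt the third class ("those that are mixed") that the passage mentions.

```python
def odd_sieve(limit):
    """Split the odd numbers 3..limit into primes and composites.

    Hypothetical helper for illustration; a modern rendering of the sieve,
    not a reconstruction of Nicomachus's own wording.
    """
    odds = list(range(3, limit + 1, 2))
    composite = set()
    for n in odds:
        if n * n > limit:
            break  # every remaining composite has already been struck out
        if n in composite:
            continue  # multiples of a composite are already covered
        # Strike out the odd multiples of n, starting from n * n.
        for m in range(n * n, limit + 1, 2 * n):
            composite.add(m)
    primes = [n for n in odds if n not in composite]
    composites = [n for n in odds if n in composite]
    return primes, composites


if __name__ == "__main__":
    primes, composites = odd_sieve(30)
    print("prime and incomposite:", primes)
    print("secondary and composite:", composites)
```

Stepping through the odd numbers in order and striking out the multiples of each surviving number is what lets the primes fall through "as if by some instrument or sieve".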

The mind of man may be compared to a musical instrument with a certain range of notes, beyond which in both directions we have an infinitude of silence. The phenomena of matter and force lie within our intellectual range, and as far as they reach we will at all hazards push our inquiries. But behind, and above, and around all, the real mystery of this universe [Who made it all?] lies unsolved, and, as far as we are concerned, is incapable of solution.

In 'Matter and Force', Fragments of Science for Unscientific People (1871), 93.